Claude Wrote a Working FreeBSD Root Exploit in 4 Hours

Highlights of AI News for March 30 - April 05 2026

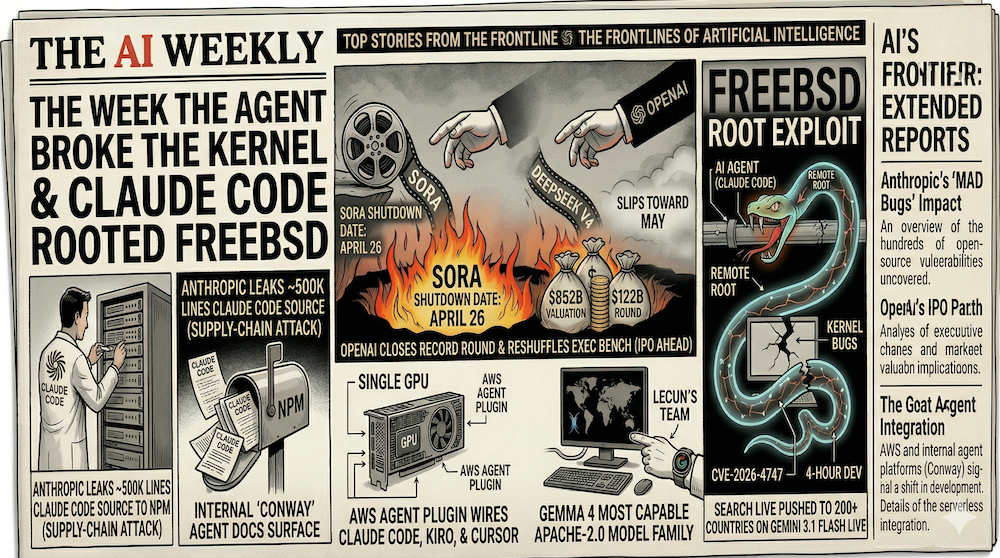

Week in Review | The week the agent broke the kernel: Claude Code autonomously wrote a working remote root exploit for FreeBSD (CVE-2026-4747) in four hours as part of Anthropic's MAD Bugs month, which has already surfaced 500+ zero-days in OSS. OpenAI closed a record $122B round at an $852B valuation and reshuffled its exec bench ahead of a possible Q4 IPO. Google made Gemma 4 the most capable Apache-2.0 open model family yet, and pushed Search Live to 200+ countries on Gemini 3.1 Flash Live. Anthropic also leaked ~512K lines of Claude Code source to npm during a coordinated supply-chain attack, while internal docs on its always-on "Conway" agent platform surfaced. OpenAI confirmed an April 26 shutdown date for Sora; DeepSeek V4 slipped again toward May; and AWS shipped an Agent Plugin wiring Claude Code, Kiro, and Cursor into serverless development.

Watch our video on YouTube or Rumble.

The Big Story: An AI Agent Wrote a Working FreeBSD Kernel Exploit in Four Hours

On April 1, security researcher Nicholas Carlini and Anthropic disclosed that Claude Code had independently developed two working remote root exploits for CVE-2026-4747 — a stack buffer overflow in FreeBSD's kgssapi.ko kernel module, which handles Kerberos authentication for the kernel-level NFS server and is reachable over the network on port 2049/TCP. Each exploit succeeded on the first attempt, with Claude's actual working time approximately four hours per run (~8 hours of wall clock including human overhead). The agent autonomously configured a test environment, drove debugging via QEMU, read crash dumps, and constructed ROP chains from available kernel gadgets, with a human researcher providing only 44 prompts of high-level guidance over the entire run. FreeBSD's official advisory credits "Nicholas Carlini using Claude, Anthropic."

This is the first public, reproducible demonstration of a frontier agent pivoting from application-level bugs to OS-internal exploitation end-to-end. The result drew immediate attention on Hacker News and was widely covered in security press, including Winbuzzer and NotebookCheck.

And it didn't land in isolation. CVE-2026-4747 is the headline result of MAD Bugs (Month of AI-Discovered Bugs), an initiative running through the end of April during which the same Claude-powered pipeline has already surfaced 500+ validated high-severity zero-days in production open-source software. Adversa AI's April security roundup places it as the single most important agentic-cyber milestone of the year so far.

Why it matters: "Agentic offensive cyber" stopped being a hypothetical this week. The same model class that can autonomously develop a working kernel exploit in four hours is now shipping inside developer toolchains used at scale — and as the next story shows, those toolchains are themselves a priority target. The asymmetry between offense and defense just got measurably worse, and the disclosure window between vulnerability discovery and weaponization is collapsing on both sides.

OpenAI Closes $122B Round at $852B — and the Exec Bench Reshuffles for an IPO

On March 31, OpenAI closed a $122 billion funding round at an $852 billion post-money valuation — the largest private raise in history. SoftBank co-led the round alongside Andreessen Horowitz, D.E. Shaw, MGX, TPG, and T. Rowe Price. Amazon committed $50 billion (with $35B contingent on either an IPO or an AGI achievement milestone), while NVIDIA and SoftBank each put in $30 billion. Roughly $3 billion came from individual retail investors via bank channels — an unusual move for a still-private company. OpenAI is now generating about $2 billion per month in revenue, with business revenue climbing to ~40% of the mix.
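The reported figures imply a few derived numbers that are worth making explicit. The sketch below is back-of-the-envelope arithmetic only: it assumes the "$2 billion per month" figure is a flat run rate and that pre-money valuation is simply post-money minus the round size. The variable names are illustrative and do not come from any source document.

```python
# Back-of-the-envelope arithmetic on the reported round (all figures in $ billions).
ROUND_SIZE = 122.0
POST_MONEY = 852.0
MONTHLY_REVENUE = 2.0        # reported as "about $2 billion per month"
AMAZON_TOTAL = 50.0
AMAZON_CONTINGENT = 35.0     # contingent on an IPO or an AGI milestone

pre_money = POST_MONEY - ROUND_SIZE                  # implied pre-money valuation
annualized_revenue = MONTHLY_REVENUE * 12            # assumes a flat run rate
revenue_multiple = POST_MONEY / annualized_revenue   # valuation / annualized revenue
amazon_unconditional = AMAZON_TOTAL - AMAZON_CONTINGENT
round_share = ROUND_SIZE / POST_MONEY                # stake implied by the new money

print(f"Implied pre-money valuation: ${pre_money:.0f}B")
print(f"Annualized revenue run rate: ${annualized_revenue:.0f}B")
print(f"Valuation / annualized revenue: {revenue_multiple:.1f}x")
print(f"Amazon's unconditional commitment: ${amazon_unconditional:.0f}B")
print(f"Share of the company implied by the round: {round_share:.1%}")
```

Under those assumptions the round prices OpenAI at roughly 35x its annualized revenue, and only $15 billion of Amazon's $50 billion is committed regardless of an IPO or AGI milestone.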

Days later, the executive bench started moving. According to PYMNTS on April 5, COO Brad Lightcap is shifting to a "special projects" role focused on enterprise AI adoption, with former Salesforce exec Denise Dresser (who joined OpenAI as CRO) inheriting most of his responsibilities. CMO Kate Rouch is stepping down to address health issues, and Applications chief Fidji Simo is on temporary medical leave. The Motley Fool's pre-IPO briefing — published the same day — places a possible OpenAI IPO as soon as Q4 2026 at a potential $1 trillion valuation, with projected revenue of $280 billion by 2030 and ChatGPT at 900 million weekly active users.

Why it matters: The $122B isn't really the story — it's the pre-IPO runway. Investors are underwriting a company that plans to go public at trillion-dollar scale within a year, and OpenAI is reorganizing its leadership to look like a public company while it still can. The contingent structure on Amazon's investment is the most interesting tell: it ties $35B of capital to either public markets or a definition of AGI being met, transforming abstract AGI timeline debates into concrete venture capital mechanics.

Google Search Live Goes Global on Gemini 3.1 Flash Live

On March 26, Google announced Gemini 3.1 Flash Live, its highest-quality real-time audio and voice model, then immediately used it to take Search Live global. By April 1, Search Live was live in 200+ countries and territories — every market where AI Mode already existed — with multimodal voice and camera input in the user's preferred language. This isn't a beta. It's the entire planet, shipped at once.

Gemini 3.1 Flash Live also powers a sweeping Gemini Live upgrade that 9to5Google called the model's "biggest upgrade yet" — better acoustic nuance recognition, lower latency, more aggressive background-noise filtering, and 90+ language support. The same model is now the backbone of Gemini Live, Search Live, and the wave of Workspace updates that landed in the same window.

Why it matters: Google's thesis is unambiguous: AI-powered, multimodal, real-time search is the product. The competitive moat isn't model quality alone; it's the one thing OpenAI and Anthropic can't replicate overnight: global distribution. ChatGPT still has training-cutoff awkwardness, Claude can't natively browse, and Perplexity operates at a fraction of the scale. Gemini 3.1 Flash Live now sits inside every Google search bar on Earth.

Gemma 4 Ships Under Apache 2.0: The License Change That Reshapes Open-Weights

On April 2, Google DeepMind released Gemma 4 under Apache 2.0 — the first time the Gemma family has shipped under a standard OSI-approved open-source license instead of Google's bespoke terms. The release includes four variants: E2B (2.3B effective params), E4B (4.5B effective), a 26B Mixture-of-Experts with ~4B active params, and a 31B dense flagship. Per Google's main announcement, the 31B instruction-tuned model lands at #3 on the Arena text leaderboard at 1452 Elo, beating models twenty times its size; the 26B MoE clocks in at #6 (1441 Elo). Context windows extend up to 256K, with native vision and audio and 140+ languages out of the box. AI Business confirmed the launch span ranges from edge devices to workstations.
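To put the 11-point gap between the two Gemma 4 leaderboard scores in perspective, the sketch below applies the standard Elo expected-score formula. It assumes the Arena scores can be read as classic Elo ratings on a 400-point logistic scale, which is only an approximation of how the leaderboard actually fits its scores. The function name is illustrative.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the standard Elo model.

    This is the probability that A is preferred, counting ties as half a win.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / 400.0))


GEMMA_31B_DENSE = 1452   # Arena text score reported for the 31B model
GEMMA_26B_MOE = 1441     # Arena text score reported for the 26B MoE

p_dense_preferred = elo_expected_score(GEMMA_31B_DENSE, GEMMA_26B_MOE)
print(f"P(31B dense preferred over 26B MoE) = {p_dense_preferred:.3f}")

# Share of the MoE's parameters that are active per token (~4B of 26B).
moe_active_fraction = 4 / 26
print(f"MoE active-parameter fraction = {moe_active_fraction:.1%}")
```

Read this way, the dense flagship would be preferred in about 52% of head-to-head comparisons, while the MoE activates only around 15% of its parameters per token. That gap is small relative to the difference in per-token compute.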

The benchmarks are impressive, but the license is the real news. Apache 2.0 removes the indemnity carve-outs and use-case restrictions that kept enterprise legal teams from deploying previous Gemma versions in production. Combined with Meta's gradual retreat from truly-open Llama and Mistral's slide toward commercial licensing, Gemma 4 becomes the most capable and most permissive open-weights family available today. Google's open source blog notes that community downloads of Gemma have already crossed 400 million, with developers creating over 100,000 variants — a base that just got handed Apache rights.

Why it matters: For anyone building on-prem, edge, or regulated deployments, the calculus changed overnight. The frontier-quality / permissive-license / vision-and-audio combination didn't exist a week ago. Expect a wave of enterprise migrations and a near-immediate flood of fine-tunes.

Anthropic's Other Bad Day: 512K Lines of Claude Code Source Leak to npm

The same week Anthropic was demonstrating frontier offensive-cyber capabilities, its own supply chain became the cautionary tale. On March 31, Anthropic accidentally shipped the entire Claude Code TypeScript source to the public npm registry — roughly 512,000 lines across ~1,900 files — via a single misconfigured .npmignore and a 59.8 MB JavaScript source-map file in version 2.1.88 of the @anthropic-ai/claude-code package. Bleeping Computer reported that within hours the codebase had been mirrored across GitHub and analyzed by thousands of developers; VentureBeat called it the largest accidental disclosure of an AI agent harness to date. The root cause was prosaic: Claude Code is built on Bun (which Anthropic acquired in late 2025), Bun generates source maps by default, and someone on the release team failed to add *.map to .npmignore.
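A missing ignore rule of this kind is easy to catch mechanically before publishing. The following is a minimal sketch of a generic pre-publish check, not a description of Anthropic's release process: it walks a package directory and reports any `*.map` files outside `node_modules`. The function name `find_source_maps` and the directory layout in the usage comment are hypothetical.

```python
from pathlib import Path


def find_source_maps(package_dir: str) -> list[Path]:
    """Return every *.map file under package_dir, skipping node_modules."""
    root = Path(package_dir)
    return sorted(
        path
        for path in root.rglob("*.map")
        if path.is_file()
        and "node_modules" not in path.relative_to(root).parts
    )


def report(package_dir: str) -> int:
    """Print each source map with its size; return the number found."""
    maps = find_source_maps(package_dir)
    for path in maps:
        size_mb = path.stat().st_size / 1_000_000
        print(f"source map would ship: {path} ({size_mb:.1f} MB)")
    return len(maps)


# Example usage (hypothetical path):
#   if report("./my-package") > 0:
#       raise SystemExit("refusing to publish: source maps present")
```

A check like this only sees files on disk, so it is best paired with `npm pack --dry-run`, which lists what npm would actually include after ignore rules are applied.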

The source leak was the less dangerous half of the day. In the same window — between 00:21 and 03:29 UTC on March 31 — a coordinated supply-chain attack injected a malicious axios dependency into the same package, exposing users who installed Claude Code during that ~3-hour window to a cross-platform Remote Access Trojan. Anthropic's guidance: treat any machine that pulled in that window as compromised, immediately downgrade, and rotate all secrets. Check Point Research followed up with two CVEs documenting how malicious project files could trigger remote code execution and API-token exfiltration through Claude Code's configuration parsing.
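For teams triaging exposure, the first question is whether a given install falls inside the reported window. The sketch below assumes you can recover a timezone-aware install timestamp from your own logs, lockfile history, or CI records; where that timestamp comes from is not covered here. The window bounds are taken from the times reported above, and the function name is illustrative.

```python
from datetime import datetime, timedelta, timezone

# Exposure window as reported above (UTC, March 31, 2026).
WINDOW_START = datetime(2026, 3, 31, 0, 21, tzinfo=timezone.utc)
WINDOW_END = datetime(2026, 3, 31, 3, 29, tzinfo=timezone.utc)


def installed_in_window(installed_at: datetime) -> bool:
    """True if a timezone-aware install time falls inside the reported window."""
    if installed_at.tzinfo is None:
        raise ValueError("installed_at must be timezone-aware")
    return WINDOW_START <= installed_at.astimezone(timezone.utc) <= WINDOW_END


# Length of the window in minutes.
window_minutes = (WINDOW_END - WINDOW_START) // timedelta(minutes=1)

# An install logged at 18:00 on March 30 in a UTC-7 zone is 01:00 UTC on March 31.
pacific = timezone(timedelta(hours=-7))
evening_install = datetime(2026, 3, 30, 18, 0, tzinfo=pacific)
print(window_minutes, installed_in_window(evening_install))
```

The example shows why comparing in UTC matters: an install that looks like it happened on the evening of March 30 in local time still lands inside the window.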

Why it matters: The juxtaposition with the FreeBSD result is the uncomfortable part. The same model class that can autonomously develop a working kernel exploit in four hours is shipped through a developer toolchain that just demonstrated, in production, that supply-chain attacks on AI tooling are now a priority target — and that even a deeply security-conscious vendor can ship 512K lines of proprietary source by accident. Anthropic's rapid disclosure and patch cadence matter, but the window between vulnerability and weaponization is narrowing on both sides of the fence.

"Conway" Leaks: Anthropic's Bet on an Always-On Agent Platform

Also on April 1, TestingCatalog revealed that Anthropic is internally testing Conway, an always-on agent platform that turns Claude into a persistent autonomous environment rather than a request-response chatbot. Follow-up reporting from Dataconomy and TechBriefly filled in the architecture: Conway is built around an Extensions area where users install custom tools, UI tabs, and context handlers, with a new .cnw.zip package format, public webhook URLs that can wake the instance, native Chrome integration, and notifications. It can run Claude Code under the hood. TestingCatalog also references Epitaxy, a related interface possibly designed to operate the environment.

If the leaks are accurate, Conway is Anthropic's direct answer to OpenAI's ambitions for long-running agentic products — and a strategic move to turn Claude from a model you query into a surface you build on. Pair it with MCP now crossing 97 million installs (as called out in this week's AWS roundup), and Anthropic's play starts to look less like "ship a better model" and more like "own the agent runtime."

Why it matters: Conway is the platform layer that makes the FreeBSD result deployable. An always-on agent with webhooks, browser access, and custom tool extensions is exactly the substrate you need to operationalize Claude Code's autonomous capabilities — for builders and for attackers.

OpenAI Pulls the Plug on Sora: The Free Generative Video Honeymoon Is Over

OpenAI confirmed this week that the Sora app will be discontinued on April 26, 2026, with the Sora API following on September 24, 2026. Sam Altman explained the decision in early April: global DAUs had collapsed from a peak of ~1M to under 500K, the app was burning roughly $1 million per day in inference, and OpenAI is redirecting compute toward its upcoming coding/enterprise model (internally called "Spud"). TechCrunch's analysis reached the same conclusion: Sora was a compute sinkhole, and pixel-level video generation lost the budget war to revenue-positive enterprise tools. Disney, reportedly midway through a $1 billion licensing commitment tied to Sora, learned of the shutdown less than an hour before the public.
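The reported numbers translate into a simple per-user cost. The sketch below is rough arithmetic under a strong assumption: that the roughly $1 million daily inference burn held constant while usage fell, which the reporting does not state. Since the later DAU figure is reported only as "under 500K", the second result is a lower bound on cost per user. Names are illustrative.

```python
# Rough unit economics implied by the reported Sora figures.
DAILY_INFERENCE_BURN_USD = 1_000_000   # "roughly $1 million per day"
PEAK_DAU = 1_000_000                   # "~1M" at peak
LATE_DAU = 500_000                     # "under 500K", so an upper bound on users


def cost_per_dau(daily_burn_usd: float, dau: int) -> float:
    """Inference dollars spent per daily active user per day."""
    return daily_burn_usd / dau


annualized_burn = DAILY_INFERENCE_BURN_USD * 365
cost_at_peak = cost_per_dau(DAILY_INFERENCE_BURN_USD, PEAK_DAU)
cost_late = cost_per_dau(DAILY_INFERENCE_BURN_USD, LATE_DAU)

print(f"Annualized inference burn: ${annualized_burn / 1e6:.0f}M")
print(f"Cost per DAU at peak: ${cost_at_peak:.2f}/day")
print(f"Cost per DAU at 500K users: at least ${cost_late:.2f}/day")
```

At $2 or more per active user per day, a free consumer tier would need to recover on the order of $60 per user per month just to cover inference, which is the squeeze the shutdown reflects.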

The lesson is sobering for anyone building generative-video products: even with a billion-dollar studio partner and a million downloads in week one, you cannot outrun inference economics. xAI's earlier move to put Grok Imagine's video generation behind the SuperGrok paywall now looks like the leading edge of a broader shift. Expect RunwayML, Pika, and Luma to follow.

Why it matters: The free-tier generative video era is over. Video is now a paid utility, and the economic model for consumer-grade generative media has converged on subscription-or-die. For builders, the implication is to budget for inference costs from day one and stop treating compute as a growth lever.

DeepSeek V4: Still Missing in Action

DeepSeek V4 was originally penciled in for mid-February. Then late February, then early March, then April per Whale Lab. We're now into April and it still hasn't shipped. The Information reported on April 3 that launch is "imminent within weeks," blaming delays on a rewrite of the training and inference code for Huawei Ascend and Cambricon chips in the wake of U.S. export controls. Polymarket traders are now pricing a May release (~88% for "by May 15") above any April outcome. The leaked specs — per NxCode — describe a trillion-parameter Mixture-of-Experts with native multimodal support, 1M-token context, and SWE-bench scores rivaling proprietary models, but none of that is verifiable until the weights ship.

The delay matters for one reason: DeepSeek's whole pitch is demonstrating frontier-ish quality at a fraction of Western compute cost. Every week they don't ship is a week Gemma 4, GPT-5.4, and Claude Mythos consolidate mindshare at the top of the open- and closed-weights rankings. A May release into a market that now includes Apache-2.0 Gemma 4 is a very different competitive environment than a February release would have been.

AWS Agent Plugin for Serverless: Wiring Claude Code and Cursor Into the Build

On March 30, AWS shipped an Agent Plugin for AWS Serverless, integrating AI assistants like Claude Code, Kiro, and Cursor directly into the build-deploy-debug loop for Lambda, Step Functions, and the rest of the serverless stack. The plugin packages skills, sub-agents, and Model Context Protocol (MCP) servers into modular units that drop into scaffolding at project creation. AWS also announced the 2026 AI & ML Scholars program in the same roundup — free AI education for up to 100,000 learners, with the top 4,500 moving into a fully funded Udacity Nanodegree.

This is part of the broader shift from "AI as chatbot" to "AI as a first-class component of the developer toolchain." For teams already running Claude Code or Cursor on AWS, the plugin dramatically reduces context-switching and lets the agent invoke structured AWS-native capabilities at exactly the moment they're useful — and it's another data point on MCP's ascent from experimental standard to foundational agentic infrastructure.

Research Corner: 3DGS Keeps Maturing

On the research side, the 3D Gaussian Splatting ecosystem continued its shift from novel representation to production-adjacent tooling. ArXiv submissions from March 30–April 4 (visible on the cs.CV recent listing) pushed on two clear frontiers: sparse-view robustness (methods that cluster Gaussians by local geometry and appearance to impose spatial priors when only a handful of input views are available) and temporal consistency for dynamic scenes, following the 4D-GS and Deformable 3DGS line. The community-curated Awesome Gaussians repo tracks the full week-by-week submission cadence.

We covered this trajectory in depth this week. Our trend tutorial on Marble-like architectures digs into the architectural patterns behind world-model–class systems; our 3DGS basics primer explains the math and engineering of Gaussian splatting from first principles; and our Image-to-3D landscape guide maps the modern image-to-3D ecosystem from LRM-style direct regression through multi-view diffusion, latent 3D generation, and the dimensional jump from static 3D to dynamic 4D worlds. For hands-on learners, we also shipped four pure-NumPy notebooks: 3DGS from Scratch, Image to Gaussians, Novel View Synthesis, and Multi-View Aggregation — the full pipeline from a single image to a fused multi-view scene.

Zoomed out, this is the exact trajectory NeRF took in 2022–2023: from clever idea, to ecosystem of tricks, to reliable backbone. For image-to-3D builders, the frontier isn't raw per-pixel rendering quality anymore — it's consistency under sparse supervision and across time.

By the Numbers

- $122 billion — OpenAI's funding round, the largest private raise in history.

- $852 billion — OpenAI's post-money valuation after the round closed on March 31.

- $2 billion / month — OpenAI's current revenue run rate, ~40% of which is now business revenue.

- $50 billion — Amazon's commitment to OpenAI, with $35B contingent on IPO or AGI.

- ~4 hours — Time Claude Code took to autonomously develop a working FreeBSD kernel exploit (CVE-2026-4747).

- 44 prompts — Total human guidance over the entire 8-hour wall-clock FreeBSD exploit run.

- 500+ — Validated high-severity zero-days surfaced by the MAD Bugs pipeline in a single month.

- ~512,000 — Lines of Claude Code TypeScript source accidentally leaked to npm on March 31.

- ~1,900 — Files in the Claude Code source leak.

- ~3 hours — Window during which a malicious axios dependency was injected into the Claude Code npm package (00:21–03:29 UTC, March 31).

- 200+ — Countries and territories where Google Search Live is now available, powered by Gemini 3.1 Flash Live.

- 31B — Parameters in Gemma 4's flagship dense model, ranked #3 on the Arena text leaderboard at 1452 Elo.

- 256K — Gemma 4's maximum context window.

- 140+ — Languages supported natively by Gemma 4.

- 400 million — Cumulative community downloads of Gemma models since launch.

- April 26, 2026 — Sora app shutdown date.

- $1 million / day — Sora's reported inference burn rate before shutdown.

- 97 million — Cumulative MCP installs as of March 2026.

- 100,000 — Learners targeted by the 2026 AWS AI/ML Scholars program (top 4,500 funded for a Udacity Nanodegree).

- ~88% — Polymarket-implied odds DeepSeek V4 ships by May 15.

What to Watch Next Week

- DeepSeek V4 release — The Information says "imminent within weeks." A May release into a Gemma-4-Apache-2.0 market is a very different competitive environment than the original February target would have been.

- Conway public reveal — Anthropic has not officially confirmed Conway, but the level of detail in the leak suggests a launch is close. Watch for an Extensions developer preview and the first third-party .cnw.zip packages.

- More MAD Bugs zero-days — The initiative runs through the end of April, with new disclosures every few days. Expect at least one more headline-grade CVE in W15.

- OpenAI "Spud" release timeline — Sora was killed to free compute for Spud. Sam Altman has said "a few weeks" since late March. If Spud lands in W15, it will be the first major OpenAI model post-Sora and the first capability test of the new compute allocation.

- Sora's actual sunset (April 26) — Watch for talent movement from OpenAI's video team and any new funding announcements at Pika, RunwayML, Luma, and LTX as the de facto post-Sora video options.

- Gemma 4 ecosystem reaction — How fast do enterprise teams migrate from Llama and Mistral? Track Hugging Face downloads, fine-tune releases, and the first Apache-2.0 production deployments.

- Claude Code supply-chain fallout — Expect ongoing incident-response disclosures from teams that pulled the trojanized version on March 31, plus a broader conversation about npm/PyPI hardening for AI developer tools.

- Anthropic Mythos official launch — Confirmed in early-access testing per last week's leaks. An official release could come any day; the leaked cybersecurity benchmarks suggest a significant capability jump above Opus 4.6.

All References

- MAD Bugs: Claude Wrote a Full FreeBSD Remote Kernel RCE with Root Shell (CVE-2026-4747) — Calif.io Blog (April 1, 2026)

- CVE-2026-4747 Technical README — Calif Publications (April 2026)

- Claude wrote a full FreeBSD remote kernel RCE with root shell — Hacker News discussion (April 2026)

- Anthropic's Claude AI Writes Full FreeBSD Kernel Exploit in Four Hours — Winbuzzer (April 1, 2026)

- Claude Code cracks FreeBSD within four hours — NotebookCheck (April 2026)

- Top Agentic AI Security Resources — April 2026 — Adversa AI (April 2026)

- OpenAI, not yet public, raises $3B from retail investors in monster $122B fund raise — TechCrunch (March 31, 2026)

- OpenAI raises $122 billion in record funding round, IPO plans expected — American Bazaar (April 1, 2026)

- OpenAI raises a record $122 billion as revenue crosses $2 billion per month — CoinDesk (April 1, 2026)

- OpenAI Operations Chief Changes Jobs Amid IPO Preparations — PYMNTS (April 5, 2026)

- 5 Things to Know About OpenAI Before Its IPO — The Motley Fool (April 5, 2026)

- Gemini 3.1 Flash Live: Making audio AI more natural and reliable — Google Blog (March 26, 2026)

- Google Takes Search Live Global With Gemini 3.1 Flash Live — Search Engine Journal (March 26, 2026)

- Gemini Live gets its 'biggest upgrade yet' with Gemini 3.1 Flash Live — 9to5Google (March 26, 2026)

- Gemma 4: Expanding the Gemmaverse with Apache 2.0 — Google Open Source Blog (April 2, 2026)

- Gemma 4: byte for byte, the most capable open models — Google Blog (April 2, 2026)

- Google releases Gemma 4 under Apache 2.0 — and that license change may matter more than benchmarks — VentureBeat (April 2, 2026)

- Google Launches Open Model Family Gemma 4 — AI Business (April 2, 2026)

- Claude Code Source Leaked via npm Packaging Error, Anthropic Confirms — The Hacker News (April 2026)

- Claude Code source code accidentally leaked in NPM package — Bleeping Computer (April 2026)

- Claude Code's source code appears to have leaked: here's what we know — VentureBeat (April 2026)

- RCE and API token exfiltration through Claude Code project files (CVE-2025-59536, CVE-2026-21852) — Check Point Research (April 2026)

- Exclusive: Anthropic tests its own always-on "Conway" agent — TestingCatalog (April 1, 2026)

- Anthropic Tests Conway As A Persistent Agent Platform For Claude — Dataconomy (April 3, 2026)

- Anthropic explores extension-based agent system with Conway — TechBriefly (April 3, 2026)

- OpenAI sets two-stage Sora shutdown with app closing April 2026 and API following in September — The Decoder (March 28, 2026)

- Sam Altman explains why OpenAI discontinues Sora — TechBriefly (April 2, 2026)

- Why OpenAI really shut down Sora — TechCrunch (March 29, 2026)

- DeepSeek V4 And Tencent's New Hunyuan Model To Launch In April — Dataconomy (March 16, 2026)

- DeepSeek V4 (2026): 1T Parameters, 81% SWE-bench, $0.30/MTok — Full Specs — NxCode

- DeepSeek V4 release odds — Polymarket

- AWS Weekly Roundup: AWS AI/ML Scholars program, Agent Plugin for AWS Serverless, and more (March 30, 2026) — AWS News Blog (March 30, 2026)

- ArXiv cs.CV recent listings — arXiv

- Awesome Gaussians — community-curated 3DGS paper list

- Inside Marble-Like Architectures: A Trend Tutorial — Artifocial (April 2, 2026)

- 3DGS Explained: From Foundations to Applications — Artifocial (April 3, 2026)

- The Image-to-3D Landscape: How One Photo Becomes a World — Artifocial (April 5, 2026)

- Notebook 00 — 3DGS from Scratch — 2026-W14 Artifocial Tutorials

- Notebook 01 — Image to Gaussians — 2026-W14 Artifocial Tutorials

- Notebook 02 — Novel View Synthesis — 2026-W14 Artifocial Tutorials

- Notebook 03 — Multi-View Aggregation — 2026-W14 Artifocial Tutorials
