Self-Play for LLM Self-Evolution: From Brittle Dynamics to Sustained Improvement

AI Tutorials - The trend of Self-Play

W11 Trend Tutorial · Advanced (ML Practitioner) · March 2026

Research Area: Recursive Self-Improvement (RSI)

Companion Notebooks — released progressively, links activated as each goes live:

| # | Notebook | Focus | Compute |

|---|---|---|---|

| 00 | 00_self_play_toy_example.ipynb | Toy self-play from scratch — see the core loop in action | CPU only |

| 01 | 01_self_play_fundamentals.ipynb | Self-play fundamentals with a real LLM | GPU |

| 02 | 02_self_training_star_rest.ipynb | STaR/ReST self-training loops | GPU |

1. Why This Matters Now

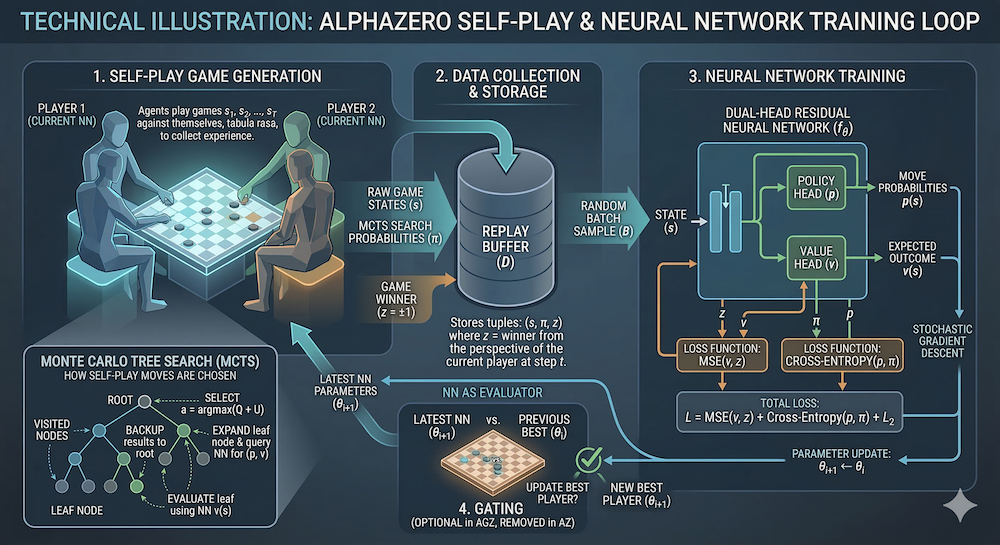

Self-play has driven some of AI's biggest breakthroughs — AlphaGo, AlphaZero, and OpenAI Five all relied on agents playing against themselves to exceed human performance. But translating self-play from games (with clear win/loss signals) to language models (with fuzzy, open-ended objectives) has been an open challenge.

Two papers published in February 2026 represent a significant shift: they formalize when and why self-play works for LLMs, and reveal a surprising theoretical connection between self-play fine-tuning and adversarial imitation learning. Together, they provide a principled framework for building self-evolving language models — systems that improve without requiring new human-annotated data.

This matters directly for foundation model research because it opens a path toward training-compute-efficient improvement loops that could complement or replace expensive RLHF pipelines.

2. Paper 1: The Proposer/Solver/Verifier Framework

Paper: Self-Play Only Evolves When Self-Synthetic Pipeline Ensures Learnable Information Gain (arXiv:2603.02218, February 2026)

Core Insight

Self-play for LLMs often fails. Models generate training data from their own outputs, but this data can be too easy (no learning signal), too hard (model can't learn from it), or degenerate (mode collapse). The key contribution of this paper is identifying three distinct functional roles that must be present for self-play to sustain improvement:

| Role | Function | Failure Mode if Missing |

|---|---|---|

| Proposer | Generates tasks/problems at the right difficulty level | Tasks are trivially easy or impossibly hard → no learning signal |

| Solver | Attempts solutions to proposed tasks | Solutions are always correct (too easy) or always wrong (too hard) |

| Verifier | Evaluates solutions and provides training signal | No reliable signal → model trains on noise |

The "Learnable Information Gain" Condition

The paper formalizes a necessary condition for sustained self-evolution. Self-play produces improvement only when the self-synthetic pipeline ensures learnable information gain — meaning:

- The Proposer generates tasks that sit in the Solver's zone of proximal development (tasks the model can't solve yet but has enough capability to learn from)

- The Verifier provides accurate, discriminative feedback (not just binary right/wrong, but signal that guides improvement)

- The difficulty of generated tasks scales with the model's improving capabilities (adaptive curriculum)

Formally, if we denote the model's capability at iteration as , the self-play loop produces improvement only when:

where is a threshold below which the learning signal is too weak to overcome noise in the self-training loop.

Experimental Findings

The authors demonstrate their framework across mathematical reasoning and code generation:

- Sustained improvement: When all three roles are properly calibrated, models show consistent gains over 10+ iterations of self-play (no plateau or collapse)

- Brittle dynamics: Removing or weakening any role leads to one of three failure modes:

- Proposer failure: Tasks become static → model memorizes rather than generalizes

- Solver saturation: Model solves everything the Proposer generates → no gradient signal

- Verifier noise: Incorrect verification introduces "reward hacking" in the training loop

- Curriculum emergence: With a well-calibrated Proposer, task difficulty naturally increases as the model improves — an emergent curriculum without explicit difficulty scheduling

Practical Implications

This framework gives practitioners a diagnostic tool: if your self-play loop stalls, check which of the three roles is the bottleneck. It also suggests that investing in better Verifiers (process reward models, formal verification where possible) may yield more return than scaling the self-play loop itself.

3. Paper 2: Self-Play as Adversarial Imitation Learning

Paper: Your Self-Play Algorithm is Secretly an Adversarial Imitator: Understanding LLM Self-Play through the Lens of Imitation Learning (arXiv:2602.01357, February 2026)

Core Insight

This paper reveals a theoretical equivalence: self-play fine-tuning (SPIN-style methods) is mathematically equivalent to adversarial imitation learning. Specifically, the self-play objective implicitly solves a min-max game:

where is the model's current distribution and is an implicit discriminator. This is structurally identical to the GAN objective, but operating in the space of language model outputs.

Why This Connection Matters

The adversarial imitation framing explains several empirical observations that were previously mysterious:

-

Weak-to-strong improvement without preference data: In standard RLHF, you need human preference annotations. Self-play methods like SPIN show that a weak model can improve by distinguishing its own outputs from reference outputs — the paper shows this works because the model is implicitly learning to "imitate" a stronger distribution through adversarial dynamics.

-

Convergence properties: The min-max formulation inherits convergence guarantees from adversarial imitation learning theory. Under certain regularity conditions, the model converges to the reference distribution — providing a theoretical explanation for why self-play fine-tuning doesn't diverge (when properly set up).

-

Mode collapse prevention: The adversarial lens suggests specific regularization strategies (analogous to GAN training tricks) that can prevent the model from collapsing to a narrow output distribution.

The SPIN Connection

Self-Play Improvement (SPIN), introduced in 2024, showed that an LLM can improve by playing against itself — the model at iteration tries to distinguish its own outputs from reference data, and the model at iteration is trained to fool this discriminator. The new paper proves this is exactly adversarial imitation learning, where:

- The generator (current model) tries to produce outputs indistinguishable from reference data

- The discriminator (previous model iteration) tries to tell apart generated vs. reference outputs

- The training signal comes from this adversarial game, not from explicit human preferences

Key Results

- Models trained with SPIN-style self-play achieve performance comparable to DPO-trained models on standard benchmarks, without requiring any preference annotations

- The adversarial imitation framing predicts (and experiments confirm) that:

- More self-play iterations help up to a point, then marginal returns diminish

- The quality of the reference data matters more than the quantity

- Mixing self-play with a small amount of preference data yields the best results

4. Connecting the Two Papers

These papers are complementary:

| Aspect | Paper 1 (Proposer/Solver/Verifier) | Paper 2 (Adversarial Imitation) |

|---|---|---|

| Focus | When does self-play work? | Why does self-play work? |

| Framework | Diagnostic: identify bottlenecks | Theoretical: prove equivalence |

| Key insight | Need learnable information gain | Self-play = adversarial imitation |

| Practical takeaway | Invest in Verifier quality | Can skip preference data collection |

| Limitation addressed | Explains failure modes | Explains convergence properties |

Together, they suggest a practical recipe for self-evolving LLMs:

- Design the Proposer to generate problems in the model's zone of proximal development

- Build a strong Verifier (process reward model or formal checker where possible)

- Use the adversarial framing to understand convergence — if the model stalls, it may be because the implicit discriminator (previous iteration) is too similar to the generator (current iteration), providing insufficient gradient

- Augment with small amounts of high-quality preference data for the best of both worlds

5. Broader Context: Where Self-Play Fits in the RSI Landscape

Self-play for LLM self-evolution sits at the intersection of several active research threads:

- STaR / ReST family: Self-play extends the self-training paradigm (generate rationales → filter → fine-tune) by adding an adversarial or game-theoretic component

- Process reward models: The Verifier role in Paper 1 aligns with the broader push toward step-level reward signals (cf. On the Role of Feedback in Test-Time Scaling of Agentic AI Workflows)

- Darwin Gödel Machine: While self-play operates at the training level, DGM operates at the agent level (self-modifying code). Both are RSI, but at different abstraction layers

- AlphaEvolve: DeepMind's evolutionary approach is essentially self-play for code optimization — generate, evaluate, select, evolve

- ICLR 2026 RSI Workshop: Both papers are directly relevant to the workshop's focus on "production loops" and "diagnostics for RSI systems" (April 26-27, Rio)

6. Open Questions

- Scaling behavior: Do the Proposer/Solver/Verifier dynamics hold at frontier scale (100B+ parameters), or do new failure modes emerge?

- Multi-modal self-play: Can these frameworks extend to vision-language models? Video-STaR suggests yes, but the Verifier challenge is harder for visual outputs

- Verifier bootstrapping: If the Verifier itself is an LLM, how do we prevent the Verifier from degrading alongside the Solver? (The bootstrapping data collapse problem)

- Safety implications: Self-evolving systems that don't require human feedback in the loop raise alignment concerns — how do we ensure the improvement direction aligns with human values?

- Interaction with test-time compute: How does self-play training interact with test-time scaling methods (chain-of-thought, tree search)? Are they complementary or redundant?

References

- Self-Play Only Evolves When Self-Synthetic Pipeline Ensures Learnable Information Gain (Feb 2026)

- Your Self-Play Algorithm is Secretly an Adversarial Imitator (Feb 2026)

- Model Behavior Specification via LLM Self-Playing and Self-Improving (Mar 2026)

- SPIN: Self-Play Fine-Tuning Converts Weak Language Models to Strong Language Models (2024)

- On the Role of Feedback in Test-Time Scaling of Agentic AI Workflows (2026)

- ICLR 2026 Workshop on AI with Recursive Self-Improvement

Stay connected:

- 📧 Subscribe to our newsletter for updates

- 📺 Watch our YouTube channel for AI news and tutorials

- 🐦 Follow us on Twitter for quick updates

- 🎥 Check us on Rumble for video content